The Ozempic Problem: The Generative AI Norms Forming in Silence, and How Youth and Adults Can Shape Them

Key Points

- Schools should not rely only on policies or literacy frameworks; they need structures that help students and adults make meaning together.

- When students participate in sensing, reflecting, and designing nudges, schools are more likely to build trust, transparency, and healthier classroom norms.

By: Shereen El Mallah and Jenny Poon, and youth authors Alex Matthew and Adora Olise

This year, Ms. Chen started using AI to provide feedback on student writing. It saved her two hours a week and ensured that the last essay in the stack received the same quality of feedback as the first. She vetted every word, but she never told her students. There was no school policy requiring disclosure, and truthfully, she couldn’t quite define where her voice ended and the algorithm’s began.

When Priya received the latest comments on her essay, something felt off. The feedback lacked Ms. Chen’s characteristic blend of honesty and warmth that had always left Priya feeling seen. The next time around, Priya did not submit her draft early for feedback. She never brought it up, but something between them shifted.

This quiet rupture is increasingly common as AI spreads into classrooms. Ms. Chen isn’t cutting corners; she’s managing an impossible workload. Priya isn’t oversensitive; she’s a developing writer for whom that human connection is part of the learning. Both realities are true, and across classrooms everywhere, the relational ground between and among students and teachers is changing.

But rupture is not inevitable. The alternate path begins with naming what’s happening.

“The Cringe List”

Young people identify moments where AI use feels disingenuous:

When the AI aesthetic announces itself through a very specific combination of emojis (a checkmark here, a lightbulb there) and a series of em-dashes.

When the syllabus includes a bolded paragraph warning about the strict no-AI policy, and the assignment prompts beneath it read like they were written by the same tool the policy just banned.

When you are in the middle of an application and don’t know if other applicants are using AI to write their materials or if AI will be reading yours.

When the feedback arrives from someone who knows you, and reads like it was written by someone who has never met you.

When the recommendation letter is “personalized” with details so generic they could describe literally anyone.

Naming the Problem

Consider an analogy shared by Fitz, a 21-year-old college student. Among his friends, Fitz has become the designated “planner,” known for spring break itineraries that never disappoint. This year, he used AI to surface hidden-gem spots and weave in personalized touches. His friends declared it his best one yet.

While battling it out in their first-ever archery dodgeball game, a friend asked, “How did you come up with this? Did you use ChatGPT?” Fitz immediately said no. It was a reflex, not a decision. He then spent the rest of the week trying to figure out the rationale behind this split-second response, especially as someone who prides himself on honesty.

What he landed on was this: “AI is like Ozempic.” Not the drug, but the conversation (or lack thereof) around it. When someone announces a new diet or a gym routine, we call it discipline. When someone uses a GLP-1 to achieve the same outcome, the response is categorically different: more complicated, more loaded, carrying an implicit question about what really counts as effort. When a tool dramatically simplifies a process, the validity of the outcome is questioned.

That is the grey zone Fitz found himself in. He had used AI to plan a better trip for his friends, and his instinct was to hide it. Not because he thought he had done anything wrong, but because the norms for whether it is socially acceptable to use AI for trip planning with friend groups simply do not exist yet.

Unlike the Ozempic debate, however, the goal isn’t to take a side on AI. It is to bring young people into the conversation about how it gets used so they are not left to navigate it alone in the silence.

Why Silence is Costly

Left long enough, that silence becomes its own kind of norm. And for adolescents, the stakes are higher. Their identity is still under construction, assembled through social comparison and peer feedback. Young people are acutely attuned to how their choices are perceived and deeply invested in what those choices signal about who they are. A norm vacuum in that context produces persistent anxiety that comes from never quite knowing whether what you just did was acceptable, impressive, or something to hide.

For young people, AI is not a tool; it’s a social disruptor. It is also, notably, not the first technology to arrive this way.

As Babak Mostaghimi notes, we’ve been here before. In 2012, young people faced a different normative crisis with the mass adoption of smartphones and social media. Adults prioritized bans over guidance, and platform developers filled the norms vacuum with social behaviors designed to boost engagement (endless doomscrolling, signaling kinship with “likes,” expecting immediate responses to direct messages). Developers benefitted financially, and youth mental health continues to pay the price.

Today, similarly harmful norms are taking shape around the use of generative AI, largely outside adult awareness. Some educators and organizations have already recognized this. The Harvard Center for Digital Thriving has developed classroom resources to help youth ground themselves in their values and navigate grey areas. The Rithm Project created a card game that people are using to spark conversations about AI and human connection. These efforts point toward the importance of creating space for young people to make sense of AI on their own terms.

But for a culture shift to take hold, new norms must spread peer to peer through the social fabric of young people’s actual lives.

What a Different Approach Looks Like

Successfully shifting youth culture requires a fundamental shift in orientation that most institutions are not currently set up for. Too often, we treat AI adoption as a technical challenge, defaulting to AI literacy frameworks and handbooks.

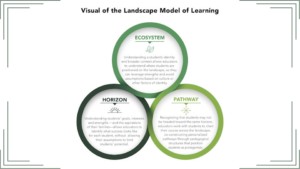

But shifting culture is a complex, relational challenge that evolves by sensing what is actually happening, reflecting on what it means, and nudging from the inside out.

Sensing: knowing what is actually happening

The first practical step is building the infrastructure to hear from young people routinely, honestly, and at scale. Hearing from young people and actually hearing them, however, are not the same thing. Surveys can tell you what is happening, but they cannot tell you why, or what it means to the person experiencing it.

Tools like SenseMaker address this directly by asking young people to share real anecdotes about their AI experience (a time they used it, avoided it, or felt conflicted about it). Follow-up questions ask them to interpret that experience (what it meant, how it felt, whether it was typical). What emerges is a clearer picture of the harder-to-reach social norms operating beneath the data (Box 2). CIE is already using SenseMaker to study culture shifts in contexts ranging from cell phones in schools to workforce development. Applying it to surface below-the-surface norms in the real-time context of AI adoption is a natural extension.

Sensing-Reflecting-Nudging in a school district

Hollywood Arts School District realizes its AI adoption policy isn’t making an impact and decides to let students lead. The district forms an “AI Culture Team” run by the Student Leadership Board. Together with teachers and a research partner, the team co-designs an anonymous story-sharing app powered by SenseMaker. Instead of a survey, the app invites students and staff to share “micro-stories” (quick, 2-minute descriptions of a moment where they used or chose not to use AI). After sharing, the user moves a few interactive sliders to explain what the micro-story means and what might be happening beneath the surface. Slider questions are drawn from examples provided by the research partner and customized by the team.

Over the next few months, the team promotes the app, encouraging students and staff to use it during homeroom (on their Chromebooks), at lunch, or after school. People spend 5-10 minutes completing it. Some use it once; others more often. As stories come in, a live dashboard immediately updates with patterns and “heat maps” representing the district’s AI culture.

Mid-year, the team reviews the dashboard, noting patterns, meanings, and missing voices. The district hosts a “data party” where students and staff collectively interpret what the data says about AI social norms and what to do about it.

Based on these insights, the team designs a “nudge” (a small, low-stakes experiment meant to influence people toward more beneficial norms). For example, if the data revealed that students were nervous about disclosing AI use in their writing, the experiment might be to develop a routine and handout with the English department for sharing prompt history as part of the creative writing process.

For the rest of the year, the app remains live, collecting stories to monitor how the culture responds. Toward the end of the year, the team reviews the dashboard again to see if stories have shifted the way they had hoped. If so, they share positive examples to reinforce the culture shift. If not, they design a different nudge and try again.

Reflecting: making meaning together

Sensing is only valuable if it leads somewhere. The second step is sharing what was heard, naming the patterns, and asking young people to weigh in on what it means and what it calls for. In practice, an intergenerational team reviews SenseMaker patterns twice a year, identifying what is moving in a healthy direction (increasing openness about AI use) and what is moving in a harmful one (concealment becoming the default). They name it in their own language, propose what to amplify and what to interrupt, and help design the nudge.

This is not a focus group or a rubber-stamp committee. It is genuine co-construction, which requires adults to show up with humility and a willingness to be surprised.

5 Questions Adults Should Ask To Start a Conversation About AI in the Classroom

Curated by youth based on their firsthand experiences.

- What is something you worry you will lose (in your thinking, your relationships, or your sense of self) if you rely on AI too much?

- If I used AI to help with your feedback or grading, what is the one thing you would want to make sure stays human?

- Is there a way you use AI for your schoolwork that feels acceptable to do but unclear whether to disclose?

- What is the biggest gap between how adults think you use AI and how you actually use it?

- If you were designing the AI policy for this class, what would you prioritize, include, or remove?

Nudging: shifting the culture from the inside

Sensing and reflecting create the conditions for the third and final step: the nudge. A nudge is a small, well-timed intervention designed to shift a norm in a specific direction.

Nudges resonate more when the people being nudged help build them. Consider the trajectory of youth anti-smoking campaigns. For years, they relied on scare tactics and grim PSAs that fell flat with teens who valued immediate social currency over long-term health. In 1998, Florida’s Truth Campaign abandoned the lecture and asked teens directly why they smoked. They uncovered an important insight: smoking wasn’t a result of ignorance, but a deliberate way to satisfy a developmental need for autonomy. By reframing Big Tobacco as the manipulative authority, the campaign flipped the script and turned the refusal of a cigarette into a defiant act of rebellion. Teen smoking dropped from 28% to less than 6%.

This same logic applies to the social currency of AI disclosure. Right now, admitting AI use carries the social risk of being seen as less capable, less creative, or less genuine. But we can move the needle by engaging young people directly. A well-designed nudge reframes disclosure from a moment of “getting caught” into an act of ownership.

Now reimagine Ms. Chen’s classroom after a few cycles of sensing and reflecting. She and her students arrive at a core truth: submitting work is an act of trust in a specific person to provide feedback that honors the writer. They co-author a nudge: a new guideline that essays will be peer-reviewed first to ensure each one has a human audience, then given AI-assisted feedback from Ms. Chen (openly acknowledged and carefully curated). They continue to sense, reflect, and nudge as the new norm plays out to ensure it feels beneficial to all.

This agreement will not appear in any handbook. New norms cannot be mandated or scaled from above. As with any shift in culture, it ripples outward as those who experience it firsthand engage in collective meaning-making and choose to carry it forward.

About the Authors

This blog was coauthored by Shereen El Mallah and Jenny Poon, and youth authors Alex Matthew and Adora Olise. Youth contributors included Mihir Sedimbi and Fitz Awiakta.

AI disclosure: The authors wrote an initial blog outline and asked Gemini to act as an editor, tightening our arguments while retaining the overall flow and main points of the original. Working from the revised outline, the authors drafted the post without AI (examples, em-dashes and all), then asked Gemini and/or Claude to copyedit, improve transitions, sharpen clarity, and reduce word count.
