A Proposal for Better Growth Measures

Taking full advantage of personal digital learning requires the ability to compare measures of academic progress in different blended school settings. As noted two weeks ago, it’s time for the next big advance in digital learning: comparable growth measures.

There are thousands of students using adaptive instructional systems every week. Millions of students engage daily with digital content that has embedded assessments. Most U.S. students will join them in the next two school years. The bad news is that, in most schools, the data is trapped in the individual applications–you may get a zippy chart but there’s not much you can do with it. There is no easy way to combine data from multiple apps with teacher observations to create a composite reporting and competency-tracking system.

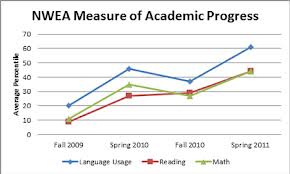

Thousands of schools use adaptive pre- and post-assessments to monitor student growth rates, but these growth rates often don’t match state estimates based on end-of-year grade-level tests.

And, by all indications, states will continue to give large-scale, end-of-year, grade-level exams through the end of the decade, even though schools will have thousands of data points on the progress of each student well before students sit down to take the test.

We could change all of this by developing a system of comparable growth rates. It would require that an independent scale and a method of describing growth on that scale be adopted. There would also need to be a simple mechanism for conducting linking studies.
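To make the idea of a linking study concrete, here is a minimal sketch of one textbook approach, linear (mean-sigma) linking, assuming a group of students has taken both an app's embedded assessment and a test already reported on the common scale. It is an illustration only, not the method MetaMetrics or any publisher actually uses; the function names and all scores are hypothetical.

```python
from statistics import mean, stdev

def fit_linear_link(app_scores, common_scores):
    """Fit a linear link from an app's scale to the common scale.

    Assumes the same students produced both score lists (single-group design).
    Returns (slope, intercept) so that common = slope * app + intercept.
    """
    slope = stdev(common_scores) / stdev(app_scores)
    intercept = mean(common_scores) - slope * mean(app_scores)
    return slope, intercept

def to_common_scale(score, link):
    """Convert one app score to the common scale using a fitted link."""
    slope, intercept = link
    return slope * score + intercept

def growth_per_month(fall_score, spring_score, months, link):
    """Express growth between two app scores in common-scale units per month."""
    gain = to_common_scale(spring_score, link) - to_common_scale(fall_score, link)
    return gain / months

# Hypothetical linking sample: five students measured on both scales.
app = [10, 20, 30, 40, 50]
common = [400, 550, 700, 850, 1000]

link = fit_linear_link(app, common)
rate = growth_per_month(fall_score=20, spring_score=30, months=8, link=link)
print(link, rate)
```

Once each app has a link like this, growth rates from different apps are expressed in the same units and can be compared or combined. A real linking study would use far larger samples and would check how well the two tests measure the same construct.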

A proposal. Given that 20 states and most test publishers (CTB, ETS, NWEA, Measured Progress, Pearson, Scholastic, Scantron, Cambium-Voyager) currently report student reading ability on the Lexile scale from MetaMetrics, it seems like a logical choice for a common reference point. In addition to test publishers, all the major text and trade book publishers have elected to use Lexile measures as a way to describe text complexity. Instructional companies are building intervention and instructional programs around Lexile measures. For example, Scholastic Reading Inventory and Curriculum Associates’ i-Ready have also linked their assessments with the Lexile Framework, illustrating the practicality of a common scale.

Add their newer Quantile scale for math (which testmakers are beginning to link to) and we’ve got a common reporting standard before the end of 2013 rather than waiting for the consortia to figure this out in 2015-16.

Not surprisingly, when I talked to Malbert Smith and Ellie Sanford-Moore of MetaMetrics, they thought using the Lexile/Quantile scales as common scales was a brilliant idea. Smith said a common scale would unlock “a storehouse of value.” With the ubiquity of Lexile- or Quantile-denominated tests and instructional and digital resources on the same scales, differentiated instruction is truly possible.

Several measurement characteristics are required to optimally measure student growth. “For the best measurement of student growth, the measurement scale must be: unidimensional, continuous, equal-interval, developmental, and invariant with respect to location and unit size,” as MetaMetrics’ Gary Williamson discussed at NCME this year. “In fact, the Lexile scale possesses all of these necessary characteristics. So it is not only appropriate for the measurement of student growth, it may well be the most appropriate scale for the measurement of academic growth in reading.”

This new vision and reality is rapidly taking shape in North Carolina. For years, North Carolina has been reporting student Lexile and Quantile measures off of their grade 3-8 assessment and their end-of-course assessments, and recently collaborated with ACT and MetaMetrics to link the ACT with the Lexile and Quantile Frameworks. This fall the K-2 test they are using to monitor reading progress (DIBELS) will be linked as well. So next year, parents and teachers will be able to log in to HomeBase and see growth trajectories to determine if a student is on target for college and careers. “These growth calculators are like retirement planners,” said Smith. “They show students and parents how to bend the curve so you can achieve your goals.”

North Carolina is a great example of productive use of summative measures–just think of the potential of instantaneously mining the instructional experience of every student and updating growth trajectories drawn from multiple real-time sources.

Psychometricians like to have year-end tests built on 50 items or more for validity. Imagine the precision available from measuring 50 items per day embedded in learning experiences.

Another advantage of Lexile, noted by Bellwether’s Andy Rotherham, is the ability to “link to real work applications–reading a DMV application, reading an editorial page–actual tasks rather than just a definition from on high of what a college and career ready standard looks like. Parents really want that and will appreciate it.” Rotherham, who supports the goals of the two state assessment consortia, stressed that a common scale is “key to a real expansion of competency-based and other approaches, it’s the kind of unifying idea that can allow more pluralism in delivery.”

Rotherham’s colleague Andy Smarick noted that it’s likely that at least a few more states will leave the consortia and that an independent scale provides a useful backstop.

Some experts have expressed concerns that Lexile measures are not accurate enough, but a recent Journal of Applied Measurement article indicates that the Lexile scale is as reliable as other measures of reading ability, with the major source of unreliability being the measurement of the students (not the measurement of the text).

Better measures of individual student growth in reading and math are key to better instruction, easier comparison of instructional tools, and management of competency-based environments. Better growth measures are also key to understanding what’s working and what’s not in online and blended environments, and to next-generation accountability systems.

We can wait two or three years and let the consortia try to figure this out, or we can use a widely adopted scale that is already embedded in the English Language Arts standards, along with a scale for math achievement that is used by many states and publishers, and expand the ability to draw common inferences about growth in student learning.

Disclosures: Curriculum Associates and Pearson are Getting Smart Advocacy Partners
