What Bloom’s Taxonomy Can Teach Us About AI

Key Points

- The most important potential of AI isn’t to enhance human productivity; it’s to enhance and support human thinking.

- Looking at AI’s capabilities through the lens of Bloom’s Taxonomy showcases the possible interplay of humans and machines.

Recently, I keynoted at the California City School Superintendents (CCSS) Fall Conference about the future of learning with AI. Even before I got there, these capable leaders were learning about AI from several axes and diverse stakeholders. They were using their previous experiences with social media to forecast what might happen with AI. They were carefully balancing the politics between their communities, their boards, their local government agencies, their parents, their staff, and their students. They were crafting policies and implementation plans.

Often, they were doing this work with little cognitive and emotional support.

Dr. Carmen Garcia, president of CCSS, Superintendent of Morgan Hill Unified School District, and an incredibly thoughtful and kind leader, welcomed the group with one sentiment: “Being a superintendent is lonely.” No matter how big your team is, the high-pressure, highly public, and highly responsible role of superintendent has little room for mistakes.

In the education world, we’ve seen the ways educators can use AI to produce lesson plans, quizzes, and report cards. But I would argue the most important potential of AI isn’t to enhance human productivity. It’s to enhance and support human thinking.

So at CCSS, I chose to prepare our superintendents to use AI as the thought partner they’ve always wanted, in a world where leading is a lonely job.

This two-part article is about AI’s cognitive abilities as a thought partner.

The first part differentiates the competencies of AI from humans. It identifies what humans can double down on as their unique advantage, while also identifying a new standard for quality of thought using AI.

The second part (coming next week) provides ideas for how leaders can train an AI thought partner to represent whoever they want – a critic, a twin, a mentor, a philosopher, or a guide.

The Cognitive Dance of AI

In the last year, we’ve seen a rapid improvement in the abilities of generative AI. It can take millions of pieces of data and reconfigure them into billions of pieces of content. However, shortcomings with data validity, misinformation, and algorithmic bias have deterred some educators from considering it a reliable tool.

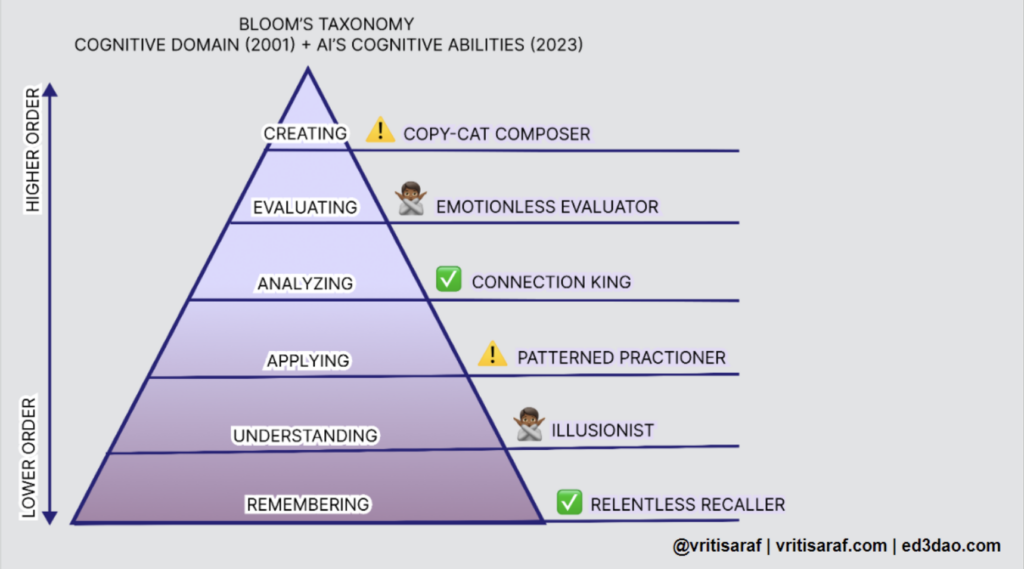

When writing my keynote, I wondered if understanding AI’s cognitive abilities could help advocate for its utility. A familiar framework came to mind: Bloom’s Taxonomy.

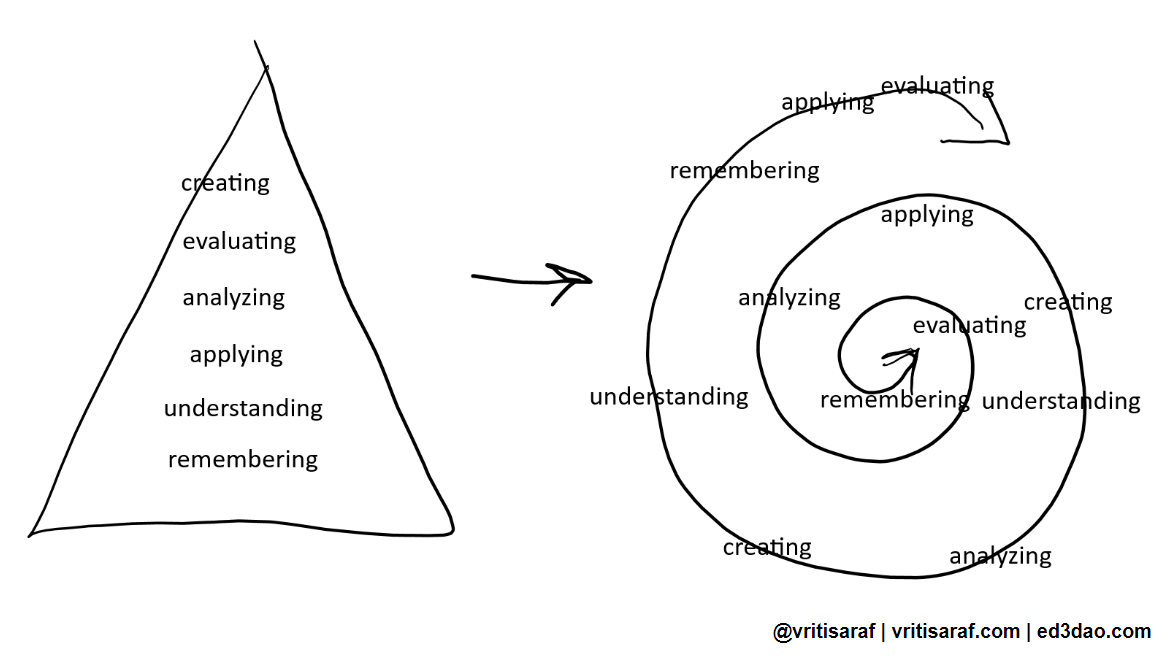

When I was a teacher, Bloom’s played an important role in lesson planning and assessing the competencies of my learners. Recent critics have appropriately recognized that these cognitive levels shouldn’t be stacked linearly, but should be more of a spiral that volleys between levels as learning is happening. Either way, it’s been the most accessible representation of learning in the last 70 years.

I expected AI’s abilities to cluster at the top or bottom of Bloom’s Taxonomy, or perhaps even to swallow all of Bloom’s. In reality, the mapping was much more spotty and varied, revealing a keen representation of human and robot capabilities.

Mapping AI to Bloom’s

Here’s my evaluation. Remember that the purpose was to set our superintendents up for understanding when and how AI is most powerful. As you read this, keep in mind how you’ve been thinking about AI.

Remembering: The Relentless Recaller

- Bloom’s Level: Remembering

- AI’s abilities: Highly competent.

- Key actions: Retrieving information such as facts, dates, definitions, or answers.

How well does AI recall data or information?

This first one is obvious. AI can simultaneously access millions of pieces of information across large databases. It will always be able to retrieve data more quickly, more accurately, and in greater abundance than humans ever will.

Understanding: The Illusionist

- Bloom’s Level: Understanding

- AI’s abilities: Not competent.

- Key actions: Recognizing, discussing, or explaining the meaning behind information.

How well does AI make meaning of information?

When I evaluated this level, I didn’t expect AI to fail so soon on Bloom’s. AI can recognize patterns, categorize data, and extract pattern-based meaning from large datasets, but it doesn’t truly “understand” in the human sense. Its comprehension is based on patterns and data, not on consciousness or intuition.

During my keynote at CCSS, the very thoughtful leader Dr. Tom McCoy, Superintendent at Oxnard Union HS District, chimed in with an incredible example. He explained how his son, when completing a homework assignment that asked him to write a goodbye letter to racism, used ChatGPT for ideas. ChatGPT replied with an opening line to the letter: “Dear Racism, We’ve had such great times in the past…” AI used pattern recognition to identify how great letters hook the reader but didn’t make meaning of the purpose of the letter and the weight of racism. AI did not understand the assignment.

AI possesses an uncanny ability to generate responses that, at face value, seem informed and profound. This is because it excels in pattern-matching, recognizing and mimicking structures, sequences, and commonalities within data. But it’s not making meaning.

Applying: The Patterned Practitioner

- Bloom’s Level: Applying

- AI’s abilities: Somewhat competent.

- Key actions: Using information in new contexts to predict, interpret, solve for, execute, or implement.

How well does AI use information in new situations?

AI, especially machine learning models, excels at applying learned patterns to new data. At the heart of AI’s application skills is a concept called “transfer learning,” which enables an AI model trained on one task to be repurposed for a second, related task without starting from scratch. This is akin to a human leveraging their knowledge of cycling to quickly learn motorcycle riding.

However, humans possess an innate ability to make intuitive leaps. If faced with an unfamiliar problem, we draw from our varied experiences, even if they seem unrelated, to find solutions. AI, on the other hand, relies heavily on patterns it has seen. It struggles in scenarios where data is sparse or where intuitive, out-of-the-box thinking is required.

So AI is only somewhat competent at this Bloom’s level, and its effectiveness really depends on the data it has and the complexity of the problem.

Analyzing: The Connection King

- Bloom’s Level: Analyzing

- AI’s abilities: Highly competent.

- Key actions: Identifying trends, differentiating, comparing, relating, and questioning.

How well does AI draw connections among ideas?

Traditionally, Bloom’s illustrates that if a student isn’t able to remember, understand or apply, they probably won’t be able to move up on the taxonomy. But seeing AI fail at the lower levels and excel at this one further helps to make the case for Bloom’s Taxonomy as a spiral construct, not a linear progression.

AI can analyze vast and multidimensional datasets with superhuman speed, identifying subtle patterns and relationships. For instance, in genetics, AI tools can sift through enormous genomic data to spot potential markers or mutations linked to diseases. AI can predict potential future patterns based on historical data, which makes it highly competent at this level.

Evaluating: The Emotionless Evaluator

- Bloom’s Level: Evaluating

- AI’s abilities: Minimally competent.

- Key actions: Making a judgment, critiquing, defending, or providing an informed opinion.

How well does AI make judgments?

The act of evaluation is not merely about decision-making based on data; it is a complex cognitive process that often demands judgment, ethics, and contextual understanding. AI falls apart at this level. It does not operate with ethical judgment, it does not have cultural nuance, and it certainly does not have emotions. It over-relies on quantifiable metrics. Although this perspective is important and can help us check our own blind spots, it is not the full picture.

We know that the instinct-based decisions leaders need to make in difficult situations are sometimes the best decisions. Steve Jobs is famous for using his instinct to launch the iPad when tablets were failing in the market.

This level is where humans can shine and have a serious advantage over the machine. I gave this one a “minimally competent” because although AI cannot make judgments, it can provide us with the right information and recommendations so we can make judgments.

Creating: The Copy-Cat Composer

- Bloom’s Level: Creating

- AI’s abilities: Somewhat competent.

- Key actions: Producing, designing, assembling, constructing, formulating.

How well does AI produce new or original work?

AI can create new content by merging patterns it has observed, but it isn’t original. It doesn’t have original thoughts, emotions, or consciousness. Even when AI creates music, artwork, or narratives, it does so by identifying and combining patterns in its training data. The result may sound or look unique to our ears or eyes, especially when the AI blends seemingly disparate styles. But at its core, AI is not inventing; it’s remixing.

And because of this, AI’s creative capacity is tethered to data. It cannot make the cognitive leaps across variable experiences even if the sheer vastness of combinations it generates seems groundbreaking. The permutations are just regurgitations in many forms.

Human creativity often springs from emotions, personal experiences, cultural contexts, and epiphanies. It’s organic, nuanced, risky, and sometimes serendipitous and unintuitive. These elements are currently beyond AI’s grasp. So although AI is highly competent at creating remixed content, it is not competent at creating original content.

How Learning Blooms

Mapping AI on Bloom’s taxonomy opened several cognitive and presentation pathways for me.

- It helped me explain the human advantage over AI

- It depicted AI as a cognitive partner

- It identified the ways learners might use AI and be duped by AI

- It allowed me to narrate how AI will elevate our standards in education for the production of content, ideas, and discourse

This last point is particularly important. One of the superintendents mentioned that using AI feels like cheating. She didn’t want people to think her thoughts and her work were not her own. That made perfect sense to me, and it was difficult to justify AI’s IP-leeching algorithms.

Instead, I shared that the calculator gave us the shortcuts we needed for quick and generic mathematics, but what we put into the calculator, how we used and contextualized the answer, and how we reasoned through the validity of the response are what made the output our own. The use of the calculator also enabled educators to level up their expectations for students. Getting an answer was no longer the sole outcome. Now, students had to show their work and reason through more difficult questions.

Although the analogy is simplistic, AI will similarly create new standards of productivity for us. The more ubiquitous AI is, the more we will use it to produce higher-quality content. When everyone is using it, we’ll think of new ways to assess student competencies.

The next article in this two-part series will dive into how AI can be a cognitive partner to leaders. In the meantime, check out my newsletter for more thoughts on AI + Web3. Join our community at Ed3 DAO to continue the conversation and to access AI courses for educators.
