Making Work-Based Learning Work: Georgia’s Novel Approach to WBL Data

Key Points

-

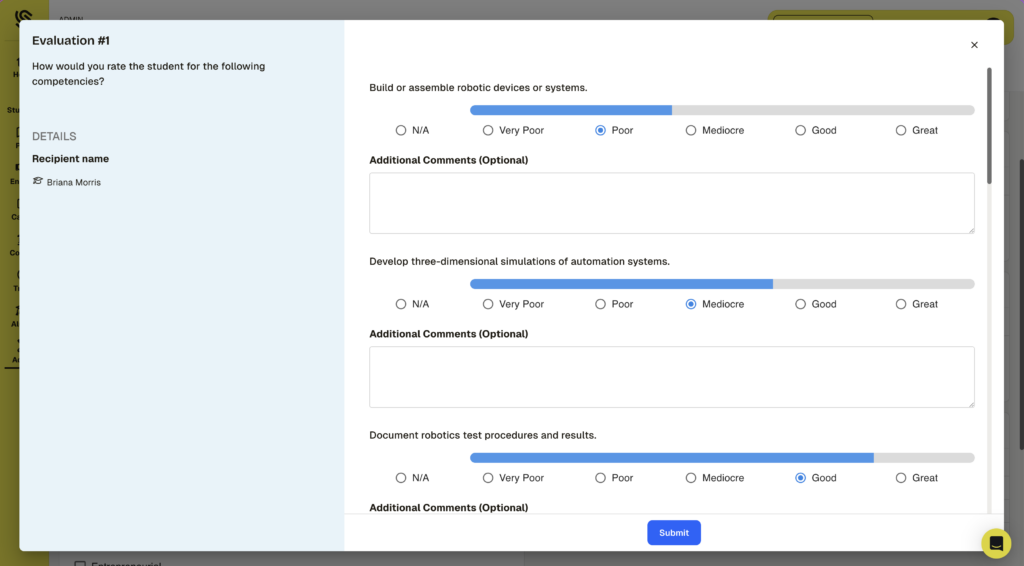

Quality WBL requires skill evidence, not just hours. Georgia’s mandated, repeated O*NET-aligned evaluations show a practical way to generate credible, comparable competency data across districts.

-

Design evaluation + credentialing together. A PDF completion certificate underuses the real value—turning employer-informed competency data into portable, verifiable digital credentials (LER-aligned) is the next lever for student opportunity.

Editor’s Note: In all platform images, student and employer names have been altered to preserve privacy.

While far better than nothing, work-based learning (WBL) has a credibility problem. A student completes an internship, clocks their hours, gets a line on their resume — and then what? Without rigorous, verifiable evidence of what skills were actually demonstrated on the job, work-based learning risks becoming just another box to check rather than a genuine launchpad into a career. It becomes a footnote to a transcript rather than a key demonstration of capability and real-world learning.

Georgia is trying to change that. And in doing so, the state has built one of the most instructive models in the country for what high-fidelity skill verification can actually look like at scale.

A State-Level Mandate That Created a Data Goldmine

Georgia’s Career, Technical, and Agricultural Education (CTAE) division within the Georgia Department of Education (GaDOE) made a deliberate policy choice: students in work-based learning programs would not just log hours. They would be evaluated, at least three times per year, against the O*NET competencies that correspond to their specific placement. To implement this, CTAE chose to work with SchooLinks, a leader in WBL tracking and college and career readiness.

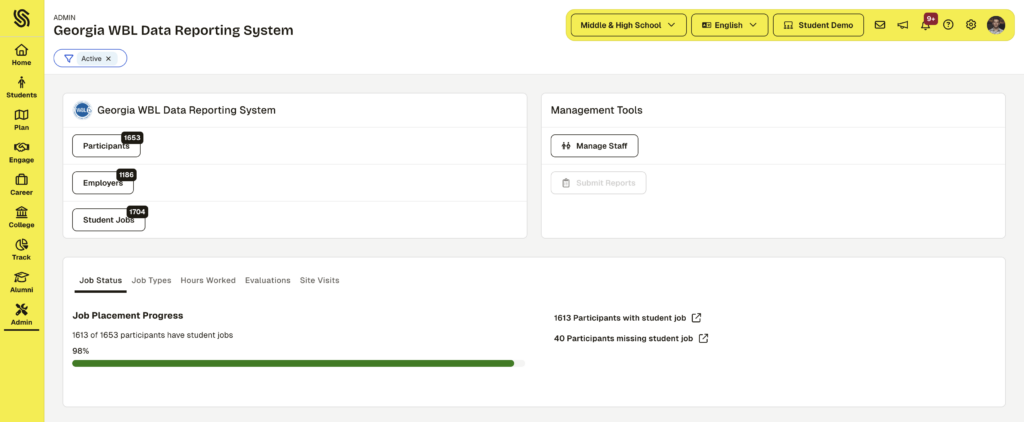

This mandate was the critical ingredient. Without it, data collection is voluntary and patchy. With it, something remarkable happened: 33,000 students have been evaluated, and nearly 270,000 skill evaluations have now been generated across the state’s work-based learning population.

Let that number sink in. 270,000 structured assessments of what students can actually do, tied to nationally recognized occupational competency frameworks. That is not anecdotal evidence of learning. That is a longitudinal, employer-informed record of skill development at scale.

This data and the initiative behind it are strategic at the state level, beyond K-12 education. Of the students who have completed the evaluation process, 38% have been assessed on at least one skill required by occupations on Georgia’s High-Demand Career List. According to the governor’s office, this list “identifies careers that are high-demand, high-wage, and high-skill to inform educational pathways for economic success and sustainable growth.”

From the first to the second evaluation of skills, the average rating of high-demand, career-aligned skills increased from 4.24 to 4.52 on a 5-point scale. In particular, students saw the largest increase in competency around digital and technical skills as they gained more hours of experience in their work-based learning roles.
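To put that change in context, here is a short worked calculation using only the two averages reported above. The "headroom closed" figure (how much of the gap to the top of the scale was closed) is an added framing for illustration, not a metric from Georgia's reporting, and it assumes the scale tops out at 5.

```python
# Averages reported in the article for high-demand, career-aligned skills.
SCALE_MAX = 5.0          # assumption: the 5-point scale tops out at 5
first_round = 4.24
second_round = 4.52

gain = second_round - first_round                    # absolute change in points
relative_gain = gain / first_round                   # change relative to the starting average
headroom_closed = gain / (SCALE_MAX - first_round)   # share of the remaining gap to 5 that was closed

print(f"absolute gain:   {gain:.2f} points")
print(f"relative gain:   {relative_gain:.1%}")
print(f"headroom closed: {headroom_closed:.1%}")
```

The gain is 0.28 points, or about 6.6% over the first-round average. Because the starting average was already high, that same gain closes roughly 37% of the distance to the top of the scale.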

How the Evaluation System Actually Works

The mechanics matter here because the system’s design makes the data trustworthy. Here is how the process unfolds:

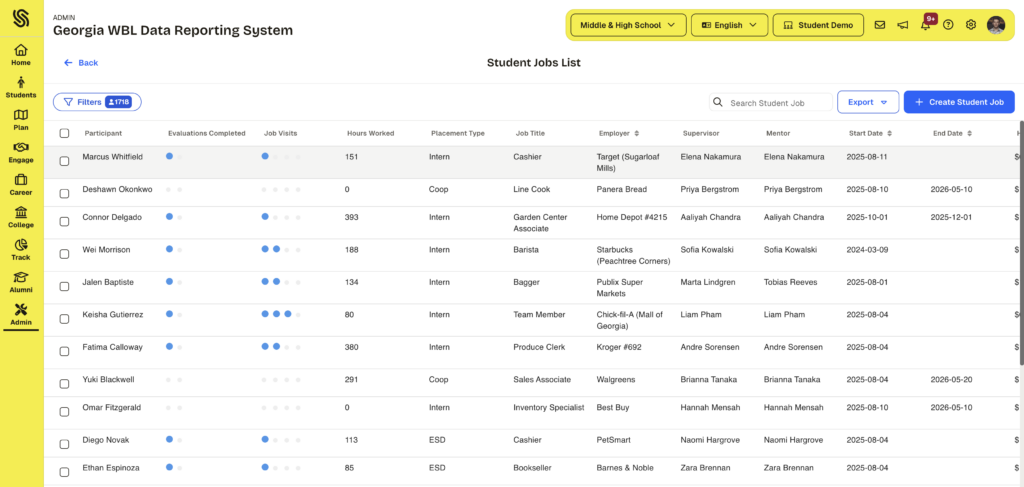

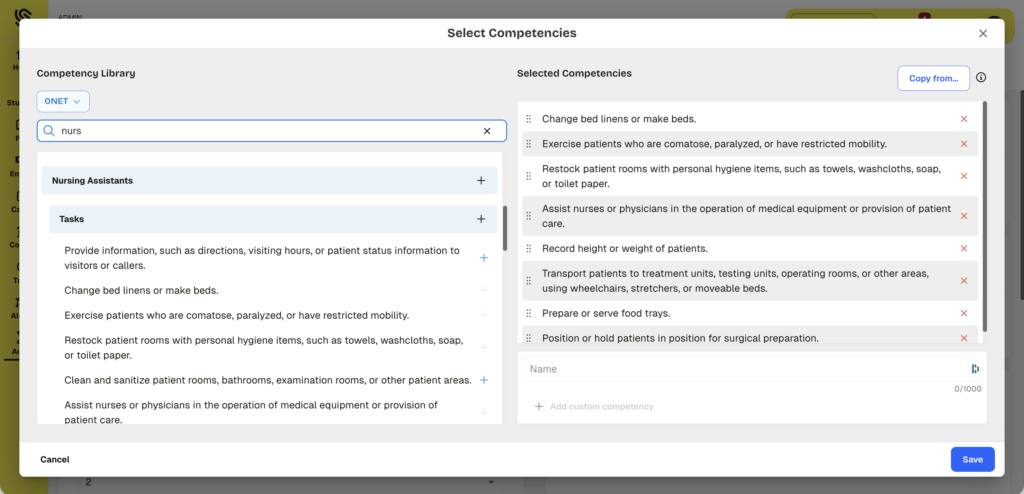

- Districts compile a list of competencies relevant to each work-based learning placement, drawing on established frameworks such as O*NET. A welding internship gets a welding competency set. A healthcare placement gets a healthcare competency set.

- Students are evaluated against those competencies on a quarterly basis, tied to the duration of their internship.

- Evaluations are completed by a staff member in consultation with the employer.

- The same competency list that was assembled at the start of the placement is what the evaluator sees and scores against. There is no drift between what was planned and what gets measured.

One of the most common failure modes in work-based learning data is that the competencies used for evaluation drift from the actual scope of work, or that evaluations happen informally and are never captured in a structured way. Georgia’s model closes both of those gaps.
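The "no drift" property described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not SchooLinks' or GaDOE's implementation: the class names, the competency codes, and the 1-to-5 rating range are all hypothetical. The idea is that the competency list is fixed when the placement is created, and every evaluation round must score exactly that list.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass(frozen=True)
class Competency:
    code: str  # hypothetical identifier, standing in for a framework ID such as an O*NET element
    name: str


@dataclass
class Placement:
    student: str
    title: str
    competencies: tuple  # fixed at the start of the placement
    evaluations: list = field(default_factory=list)

    def record_evaluation(self, ratings: dict) -> None:
        """Store one evaluation round; ratings maps competency code -> rating."""
        expected = {c.code for c in self.competencies}
        if set(ratings) != expected:
            # Reject rounds that add, drop, or rename competencies (drift).
            raise ValueError("ratings must cover exactly the placement's competency list")
        if any(not 1 <= score <= 5 for score in ratings.values()):
            # Assumption: the 5-point scale runs from 1 to 5.
            raise ValueError("ratings must be between 1 and 5")
        self.evaluations.append(dict(ratings))

    def average_by_round(self) -> list:
        """Average rating per evaluation round, in the order recorded."""
        return [mean(list(r.values())) for r in self.evaluations]


# Illustrative data only; the student and competencies are invented.
welding = Placement(
    student="Student A",
    title="Welding internship",
    competencies=(
        Competency("W1", "Weld joints to specification"),
        Competency("W2", "Read blueprints"),
    ),
)
welding.record_evaluation({"W1": 3, "W2": 4})
welding.record_evaluation({"W1": 4, "W2": 5})
print(welding.average_by_round())
```

Because each round is validated against the list assembled at the start, the per-round averages stay comparable over the life of the placement.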

The Next Frontier: From Data Collection to Digital Credentials

While this work is a big step toward high-fidelity work-based learning tracking, there is one key opportunity for further growth—portability and articulation of the experience.

In this first iteration, students who complete a youth apprenticeship receive a PDF certificate. It documents completion. It reflects hours logged. But it does not carry the richness of the underlying evaluation data: the 270,000 competency-level assessments that actually tell the story of what a student learned and demonstrated on the job. Perhaps more critically, a PDF is far less portable and machine-readable than a credential issued under modern credentialing standards.

This issue points to the larger challenge facing work-based learning programs across the country: data collection and credential issuance are often funded and procured separately, and sometimes never connected at all. The result is that the most meaningful evidence of a student’s skills — employer-validated, competency-mapped, longitudinal — lives in a reporting portal that students themselves may never be able to access or share.

The natural next step for Georgia, and for all programs committed to true skill verification, is to close the loop between evaluation and credentialing. That means:

- Converting the competency evaluation data into a verifiable digital credential that students can carry in a wallet.

- Making that credential portable, so an employer or postsecondary institution can actually see what skills were demonstrated, not just that an internship was completed.

- Giving students agency over their own records — the ability to manage their placement data themselves, rather than having it exist only in a district-administered reporting portal.

None of this is technically out of reach. The infrastructure exists; in fact, it exists within SchooLinks. Georgia’s next step is to turn this data into something students can use in future job applications and in applications to higher education or additional training opportunities.
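As a rough sketch of what "converting evaluation data into a credential" could look like, the Python below assembles an unsigned JSON payload whose shape is loosely modeled on Open Badges 3.0. Everything specific here is an assumption for illustration: the function name, the example URLs and IDs, the choice of the `RawScore` result type, and the 1-to-5 range. The field names and context URLs should be checked against the published 1EdTech specification, and a real credential would also need a cryptographic proof before it is verifiable.

```python
import json


def build_credential(issuer, student_id, achievement_id, placement, ratings, valid_from):
    """Assemble an unsigned credential payload from one placement's ratings.

    ratings maps a competency name to its most recent rating (assumed 1-5).
    """
    descriptions, results = [], []
    for index, (name, score) in enumerate(ratings.items()):
        description_id = f"{achievement_id}#competency-{index}"
        # One description per competency, so a reader knows what was rated and on what scale.
        descriptions.append({
            "id": description_id,
            "type": ["ResultDescription"],
            "name": name,
            "resultType": "RawScore",
            "valueMin": "1",
            "valueMax": "5",
        })
        # The matching result carries the rating itself.
        results.append({
            "type": ["Result"],
            "resultDescription": description_id,
            "value": str(score),
        })
    return {
        "@context": [
            "https://www.w3.org/ns/credentials/v2",
            "https://purl.imsglobal.org/spec/ob/v3p0/context-3.0.3.json",
        ],
        "type": ["VerifiableCredential", "OpenBadgeCredential"],
        "issuer": issuer,
        "validFrom": valid_from,
        "credentialSubject": {
            "id": student_id,
            "type": ["AchievementSubject"],
            "achievement": {
                "id": achievement_id,
                "type": ["Achievement"],
                "name": placement,
                "description": f"Competency evaluations from: {placement}",
                "criteria": {"narrative": "Rated by school staff in consultation with the employer."},
                "resultDescription": descriptions,
            },
            "result": results,
        },
    }


# Illustrative values only; none of these identifiers are real.
credential = build_credential(
    issuer={"id": "https://example.org/issuers/district-1", "type": ["Profile"], "name": "Example District"},
    student_id="urn:uuid:00000000-0000-0000-0000-000000000001",
    achievement_id="https://example.org/achievements/welding-internship",
    placement="Welding internship",
    ratings={"Weld joints to specification": 4, "Read blueprints": 5},
    valid_from="2025-05-30T00:00:00Z",
)
print(json.dumps(credential, indent=2))
```

The point of the sketch is the contrast with a PDF: each rated competency is a separate, machine-readable entry that a receiving employer or institution could parse, rather than a single line stating that an internship was completed.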

What Other States and Programs Can Learn

Georgia’s model offers a clear set of lessons for anyone building or scaling a work-based learning program:

- Interoperability is the foundation. Using Learner and Employment Record (LER) compliant standards like Open Badges 3.0, and representing competencies and learning standards in the CASE format, drives consensus around a generalizable and portable representation of students’ work. GaDOE’s broader investment in interoperable tooling, leveraging standards from 1EdTech and Ed-Fi, has laid the groundwork for the connected, cohesive system needed to make skills-based learning and hiring work at scale.

- Mandate creates volume. Voluntary reporting produces sparse, inconsistent data. When evaluation is required — and tied to a state reporting obligation — data quality and quantity both improve dramatically.

- Framework alignment matters. Tying evaluations to O*NET competencies gives the data meaning beyond the individual placement. It creates comparability across sites, districts, and programs.

- Employer involvement is the differentiator. Whether the evaluation is conducted by the employer, in consultation with them, or as a self-assessment, the employer’s lens is what makes the data credible to the next employer in the student’s career.

- Plan for articulation from the start. Data collection and credential issuance must be designed together. Retrofitting a credential onto an existing data system is possible, but it is harder and slower than building it from the beginning.

As discussed in our recent “Experience Matters” publication, work-based learning is not just a box to check. It is an iterative experience full of rich evidence of capability and durable skills. All stakeholders are putting in the work to design, attend, and evaluate this experience. Let’s make sure that we’re able to show it.
