AI is the Cognitive Friend We’ve Always Wanted

Recently, I keynoted at the California City School Superintendents (CCSS) Fall Conference about the future of learning with AI. Even before I got there, these capable leaders were learning about AI from several axes and diverse stakeholders. They were using their previous experiences with social media to forecast what might happen with AI. They were carefully balancing the politics between their communities, their boards, their local government agencies, their parents, their staff, and their students. They were crafting policies and implementation plans.

And oftentimes, they were doing this work with little cognitive and emotional support.

Dr. Carmen Garcia, president of CCSS, Superintendent of Morgan Hill Unified School District, and an incredibly thoughtful and kind leader, welcomed the group with one sentiment: “Being a superintendent is lonely.” No matter how big your team is, the high-pressure, highly public, and highly responsible role of superintendent leaves little room for mistakes.

In the education world, we’ve seen ad nauseam the ways educators can use AI to produce lesson plans, quizzes, and report cards. But I would argue that the most important potential of AI isn’t to enhance human productivity. It’s to enhance and support human thinking.

So at CCSS, I chose to prepare our Superintendents to use AI as the thought partner they’ve always wanted, in a world where leading is a lonely job.

This 2-part article is about AI’s cognitive abilities as a thought partner.

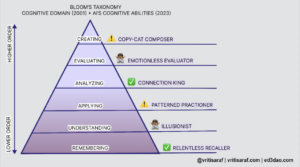

In my last piece of this series, I mapped AI’s capabilities to Bloom’s taxonomy, differentiating the competencies of AI from humans. My hope is that readers will see what humans can double down on as their unique advantage, while also identifying a new standard for quality of thought.

The second part provides ideas for how leaders can train an AI thought partner to represent whoever they want – a critic, a twin, a mentor, a philosopher, or a guide.

As a recap, mapping AI’s capabilities to Bloom’s taxonomy showed that AI’s splotchy cognitive competencies can help us:

- explain the human advantage over AI

- depict AI as a cognitive partner

- identify ways learners might use AI and be duped by AI

- narrate how AI will elevate our standards in education for the production of content, ideas, and discourse

Now, we’ll identify how leaders can finally have the thought partner they’ve always wanted.

Leaders often face complex decisions. It isn’t realistic to expect others in their ecosystem to fully evaluate the situation or the final decision, because the leader often has more information. Collaborative decision making is always an excellent strategy to involve more stakeholders, but it can also fail if the stakeholders are uninformed or the decision needs to be made quickly.

So in the moments when a leader needs to make a decision, help her collaborators make a decision, or evaluate a decision she made, who does she turn to?

Imagine if every leader had a personal coach who was critical when she needed feedback, a twin when she needed efficiency, and a philosopher when she needed inspiration. Imagine that this guide knew everything about the leader, her ecosystem, her stakeholders, and her problems.

During my keynote at CCSS, the thoughtful Dr. César Morales, Ventura County Superintendent, said he had a lightbulb moment at this point. Although he didn’t feel comfortable producing content on ChatGPT, he realized he could have it critique his work. And that completely changed his perspective on AI.

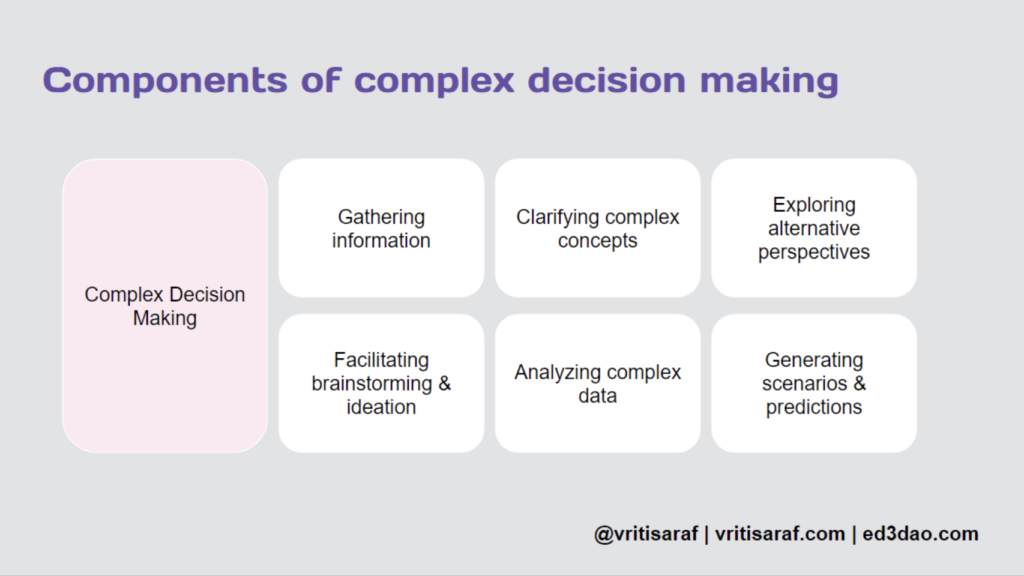

Breaking Down Complex Decision Making

So how do we do this? There are ways to literally create a digital twin using AI. In fact, my friend Bodo built two with his kids using my friend Dima’s AI platform. But let’s consider ChatGPT as our main tool.

Let’s start by breaking down complex decision making.

To make a difficult decision (or write a letter to the board, advocate for a staff member, produce a business report, etc.), leaders first have to gather and analyze the appropriate information from various sources. We can equate this to the “empathy” stage of design thinking. Without analyzing information from all sides, it’s impossible to conceive a wise decision or prioritize the components of the decision.

As leaders brainstorm a solution to their problem, they should explore alternative perspectives and generate scenarios that assess the risks, weigh the trade-offs, and predict the response. If leaders do not consider what could happen once the decision is made, they may run into bigger problems.

These components work much like Bloom’s taxonomy in that they’re more of a spiral, volleying back and forth between each other. In sum, complex decision making is made up of gathering information, clarifying complex concepts, exploring alternative perspectives, facilitating brainstorming, analyzing data, and generating scenarios and predictions.

But the reality is that leaders don’t always have the time or the skill to make this level of assessment before they execute.

Enter AI.

In addition to asking AI to brainstorm the decision for us, we can ask AI to analyze the decision we may want to make. Remember that AI cannot make meaning, so humans must always make their own judgments. Here are my go-to questions for complex decisions.

These questions allow teams to quickly iterate and adapt their decisions before executing. They allow us to simulate outcomes and consider alternatives we may never have thought of. And most importantly, they equip us with strategies for improving our thinking that we can carry into future decisions.

This, of course, is my main thesis across these articles: AI can help us become better thinkers.

Context Setting

To set up a cognitive friend on ChatGPT, we first need to set clear context for our ecosystem using the four Ps, before we even ask my go-to questions.

Place: Tell AI what makes up your ecosystem from the size of the organization to the history it’s had.

People: Describe who your stakeholders are and be as detailed as possible. Try introducing a few personas that your decision impacts.

Purpose: Identify the goals and objectives of your organization, your own professional goals in your leadership role, and any KPIs that might be relevant to the short or long term.

Problems: Explain the obstacles your organization has had over the last few years. Explain what your team has been struggling with.

By asking ChatGPT to remember these things, every new piece of information will build upon the last.

To set up a critic, add the prompt: “You are an expert in complex systems thinking, conflict-resolution, and design thinking. You are also my critical yet supportive thought partner who helps me see beyond my blindspots.”

To set up a philosopher: “You are an expert in philosophy, regenerative ecosystems, and moral theory. You are also my critical yet supportive thought partner who helps me see beyond my blindspots.”

…you get the idea. Following this, present your draft solution to AI and then ask the aforementioned go-to questions.
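For readers who would rather script this setup than type it into the chat window, here is a minimal Python sketch of the same sequence: the four Ps, then a persona, then the draft solution. It assumes the common chat convention of messages with a `role` and `content`, and it does not call any AI service. The names `Context`, `PERSONAS`, and `build_messages` are hypothetical, and the sample values in the usage section are invented placeholders, not real district data.

```python
from dataclasses import dataclass

# Persona prompts quoted from this article; the dictionary keys are illustrative.
PERSONAS = {
    "critic": (
        "You are an expert in complex systems thinking, conflict-resolution, "
        "and design thinking. You are also my critical yet supportive thought "
        "partner who helps me see beyond my blindspots."
    ),
    "philosopher": (
        "You are an expert in philosophy, regenerative ecosystems, and moral "
        "theory. You are also my critical yet supportive thought partner who "
        "helps me see beyond my blindspots."
    ),
}


@dataclass
class Context:
    """The four Ps that describe a leader's ecosystem (hypothetical helper)."""
    place: str
    people: str
    purpose: str
    problems: str

    def as_text(self) -> str:
        # One labeled line per P, in the order the article introduces them.
        return "\n".join([
            f"Place: {self.place}",
            f"People: {self.people}",
            f"Purpose: {self.purpose}",
            f"Problems: {self.problems}",
        ])


def build_messages(context: Context, persona: str, draft_solution: str) -> list[dict]:
    """Assemble persona, context, and draft into an ordered message list."""
    if persona not in PERSONAS:
        raise ValueError(f"Unknown persona: {persona!r}")
    return [
        {"role": "system", "content": PERSONAS[persona]},
        {"role": "user",
         "content": "Please remember this context about my organization:\n"
                    + context.as_text()},
        {"role": "user",
         "content": "Here is my draft solution:\n" + draft_solution},
    ]


if __name__ == "__main__":
    # Placeholder values for illustration only.
    ctx = Context(
        place="A mid-sized school district",
        people="Board members, parents, teachers, and students",
        purpose="Improve attendance over the next two years",
        problems="Staff turnover and tight budgets",
    )
    for message in build_messages(ctx, "critic", "Pilot a later start time."):
        print(message["role"], "->", message["content"], sep="\n", end="\n\n")
```

Keeping the persona in its own message means you can swap the critic for the philosopher without retyping the context. The go-to questions would follow as further user messages.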

There are oodles of prompt engineering resources out there that will show you how to increase the reliability of responses. Our Ed3 DAO community member Brian Piper recently identified prompts he’s used. Please choose your own adventure.

The main goal of setting up a cognitive thought partner is to improve your thinking, not just the production of content. Used correctly, a thought partner who knows the leader can be game changing.

Grasping our Self-Governance

Technology will outpace our ability to keep up with it. Expanding datasets and neural links will likely help AI get “smarter”. But if we want to stand a chance against the machine, we must retain our self-governance, AKA our ability to own our decisions and data. We need to continually evolve our cognitive abilities and explicitly recognize the nuances only humans know, from politics to pedagogy.

I’m grateful to the folks at CCSS for inviting me to share my ideas with them and commend their continued leadership across their school districts, despite how lonely leading can be.

Check out my newsletter for more thoughts on AI + Web3 and my website, www.vritisaraf.com. Join our community at Ed3 DAO to continue the conversation and to access AI courses for educators.
