3 Signs of Quality You Should Look For In Instructional Content

The future will bring amazingly better instructional content for teacher and student use. If the market notices key signs of this, then more effective, comprehensive content will be broadly and rapidly adopted, to the benefit of teaching and learning. I believe there are three signs the market should be looking for.

My optimism is based on a decade inside a digital content developer and publisher, focused on K-5 math, deployed in a blended in-school “rotation” model, and representing how math works with visual, dynamic interactive puzzles. You might think that developing early math content, like, say, place value, would be easy, but I can confirm that making high-quality, effective digital content for this “easy” subject is very, very hard. Most of what’s historically been out there just doesn’t cut it. But high-quality content is doable and more is coming.

So how can you tell what’s quality? Rather than trying to drill into intrinsic content and instructional design attributes, I’m going to pull way back and suggest an operational definition: What events in the market indicate high quality instructional content?

Here then are three signs to watch for:

1. Results show up on state standardized assessments.

That is to say, teachers and students using the content in question outperform “business as usual.” Quality, effective content, with a feasible implementation process, that helps teachers radically improve student learning will produce positive results, no matter what assessment is used. And the deeper the assessment, the better this signal is. I certainly hope the Common Core assessment consortia manage to go deeper as envisioned.

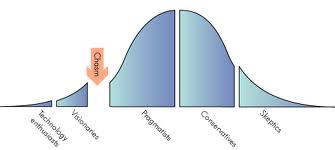

In other words, for high-quality content there will be no need to shy away from state assessment yardsticks. To the contrary, success on those high-stakes measures is a prerequisite to broad, rapid scale-up in schools. Nothing that fails to move the needle on state assessments is going to cross the chasm into the main market. Note that to “take credit” for affecting end-of-course assessments, the content syllabus will have to cover a whole course or grade. Small stand-alone learning objects, like individual apps, can’t expect to move the needle on summative assessments.

2. Results show up repeatedly at scale starting in year one of implementation.

Large-scale and district-wide deployments at 10 or more sites will in aggregate show significant and positive test results. This means that on average, results are being realized across varied groups of all teachers, and all their students. In other words, success stories will not be limited to single sites. Quality instructional tools will demonstrate relatively consistent results despite the wide real-world variability among teachers, students, and implementation. Results will be repeatable over multiple years, across student cohorts, and at a large number of sites.

3. Rapid scalability: The program design will enable straightforward implementation and earn an element of passionate teacher embrace.

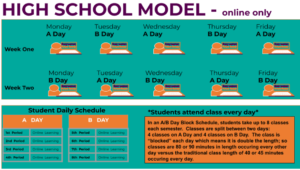

Both are vital to broad scale-up. In order to scale rapidly, use of the content will not require significant shake-up of existing school setups: it will work with the existing people, facilities, time and resources. The startup/sustaining cost all-in, including training time, will fit within instructional materials and professional development budgets and time. Put another way, to achieve broad reach and rapid scale-up, the program will show it can reach millions of students in just a few years, limited only by district decision-making.

So, please, let’s discount signs of “success” which are marketing hype, or this quarter’s popularity contest winners, until proven out. Let’s also not get confused crediting learning success mostly to platforms, or back-end features, or school structures. What is relevant to learning is, of course, the impactful moments of instructional interaction between student, content, and teacher. Those interactions will become far more successful as better instructional content becomes available.
