How did we get here?

Artificial Intelligence has been part of the common lexicon for decades, and product announcements have finally caught up to the hype. What is the arc of this journey?

Objects in the windshield are closer than they appear. Artificial Intelligence (AI), the notion that machines could exhibit human intelligence, was conceived in the 1950s, but it only recently became a really big deal, thanks to the explosion of big data powered by cheap computing and storage and by the proliferation of devices, sensors, cameras and RFID tags (the Internet of Things).

Many of the smart-machine advances in 2017 were the result of deep learning, a subset of machine learning that uses many-layered neural networks (computer models loosely based on the human brain). When combined with big data, machine learning powers enabling technologies and creates new capabilities. For example, to AI and big data add:

- Robotics, and you have custom manufacturing (often called Industry 4.0);

- Cameras and sensor packages, and you have self-driving cars;

- Sensors and bioinformatic maps, and you have precision medicine;

- CRISPR, and you have genomic editing; and

- Chatbots, and you have personalized health monitoring, retail and music.

The profound change is that rather than hard-coding a solution, you can feed large datasets into a machine learning application, and it can learn to perform a task, in some cases better and faster than human experts. The combination of machine learning and big data has produced impressive accomplishments in the last 18 months: beating the world champion Go player (after studying millions of positions from expert games and playing itself millions of times), playing dozens of Atari video games better than humans, and reading and comprehending news articles.
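To make the contrast between hard-coding and learning concrete, here is a minimal Python sketch. The task, the data and the function names (`hard_coded_rule`, `learn_threshold`) are hypothetical and chosen only for illustration. The hand-written rule uses a cutoff someone guessed in advance, while the "learned" rule is a one-feature decision stump, about the simplest learning algorithm there is: it picks whichever cutoff makes the fewest mistakes on labeled examples.

```python
# Hypothetical task: label a reading as "high" (1) or "low" (0).
# Labeled examples as (reading, label) pairs; illustrative data only.
examples = [(1.0, 0), (2.0, 0), (2.5, 0), (3.0, 0),
            (3.5, 1), (4.0, 1), (5.0, 1), (6.0, 1)]

def hard_coded_rule(x):
    # A person chose this cutoff by hand, without looking at the data.
    return 1 if x > 5.0 else 0

def learn_threshold(data):
    # Try each observed reading as a cutoff; keep the first one with the fewest errors.
    best_t, best_errors = None, len(data) + 1
    for t, _ in data:
        errors = sum((1 if x > t else 0) != y for x, y in data)
        if errors < best_errors:
            best_t, best_errors = t, errors
    return best_t

def accuracy(rule, data):
    # Fraction of examples the rule labels correctly.
    return sum(rule(x) == y for x, y in data) / len(data)

threshold = learn_threshold(examples)

def learned_rule(x):
    # Same form as the hard-coded rule, but the cutoff came from the data.
    return 1 if x > threshold else 0

print("learned threshold:", threshold)
print("hard-coded accuracy:", accuracy(hard_coded_rule, examples))
print("learned accuracy:", accuracy(learned_rule, examples))
```

On these eight points the learned cutoff (3.0) labels every example correctly, while the hand-picked cutoff misses three of them. Real machine learning systems fit far more parameters and are judged on data they were not trained on, but the shift is the same: the rule comes from the examples rather than from a programmer.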

In the early 2000s, Bill Gates aimed Microsoft researchers at speech recognition. By the end of the decade, they were making progress with deep stacks of neural networks. In the last few years, deep learning algorithms have produced accurate speech and image recognition, in some cases outperforming human experts. In published studies, for example, AI systems have matched or beaten radiologists at specific tumor-detection tasks.

Five Big Ideas in AI

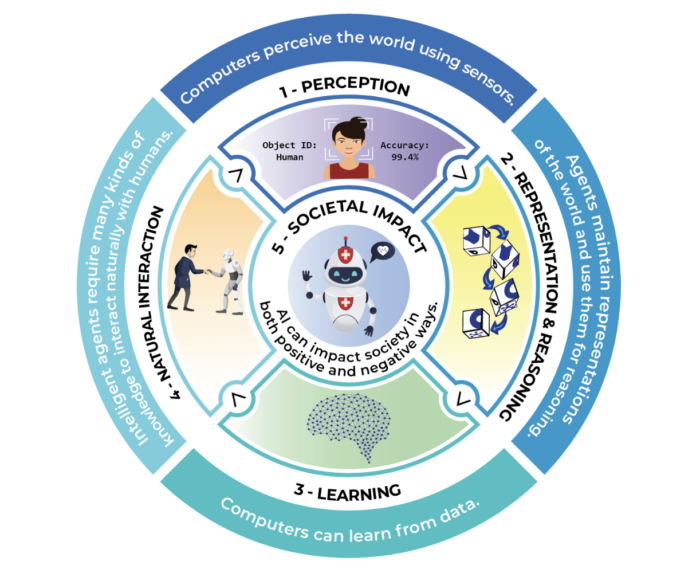

In 2019, AI4K12 released its "Five Big Ideas in AI" framework, which offers an excellent starting point for discussing the growing edges of AI in our field.

What do we teach the children?

- Perception: Computers perceive the world using sensors. Perception is the process of extracting meaning from sensory signals. Making computers “see” and “hear” well enough for practical use is one of the most significant achievements of AI to date.

- Representation and Reasoning: Agents maintain representations of the world and use them for reasoning. Representation is one of the fundamental problems of intelligence, both natural and artificial. Computers construct representations using data structures, and these representations support reasoning algorithms that derive new information from what is already known. While AI agents can reason about very complex problems, they do not think the way a human does.

- Learning: Computers can learn from data. Machine learning is a kind of statistical inference that finds patterns in data. Many areas of AI have progressed significantly in recent years thanks to learning algorithms that create new representations. For the approach to succeed, tremendous amounts of data are required. This “training data” must usually be supplied by people, but is sometimes acquired by the machine itself.

- Natural Interaction: Intelligent agents require many kinds of knowledge to interact naturally with humans. Agents must be able to converse in human languages, recognize facial expressions and emotions, and draw upon knowledge of culture and social conventions to infer intentions from observed behavior. All of these are difficult problems. Today’s AI systems can use language to a limited extent, but lack the general reasoning and conversational capabilities of even a child.

- Societal Impact: AI can impact society in both positive and negative ways. AI technologies are changing the ways we work, travel, communicate, and care for each other. But we must be mindful of the harms that can potentially occur. For example, biases in the data used to train an AI system could lead to some people being less well served than others. Thus, it is important to discuss the impacts that AI is having on our society and develop criteria for the ethical design and deployment of AI-based systems.
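The Representation and Reasoning idea can be illustrated with a minimal Python sketch of forward chaining, one classic reasoning technique among many (the AI4K12 framework does not prescribe it). Facts are stored in a data structure, here a set of (subject, relation, object) tuples, and a single if-then rule is applied repeatedly to derive facts that were never stated explicitly. The facts, the rule and the function name `forward_chain` are hypothetical examples.

```python
# Representation: what the agent "knows", stored as (subject, relation, object) tuples.
facts = {
    ("Rex", "is_a", "dog"),
    ("dog", "is_a", "mammal"),
    ("mammal", "is_a", "animal"),
}

def forward_chain(known):
    """Derive new facts until nothing new appears.

    Hypothetical rule for illustration: if A is_a B and B is_a C, then A is_a C.
    """
    derived = set(known)
    while True:
        new_facts = {
            (a, "is_a", c)
            for (a, r1, b) in derived if r1 == "is_a"
            for (b2, r2, c) in derived if r2 == "is_a" and b2 == b
        }
        if new_facts <= derived:  # no new conclusions: reasoning is finished
            return derived
        derived |= new_facts

conclusions = forward_chain(facts)
print(("Rex", "is_a", "animal") in conclusions)  # True
```

Nobody told the program that Rex is an animal; it reached that conclusion from three stated facts and one rule. This is the sense in which representations "support reasoning algorithms that derive new information from what is already known," even though the program is not thinking the way a human does.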

To teach these core tenets, we will need to develop, embed and scale curriculum that helps young learners identify and leverage AI in their daily and professional lives. Several organizations have developed programs and curriculum that seek to do just that.

Examples of programs and curriculum:

- AI Fluency: AI bootcamp

- Ready AI: Kits & competitions

- Invent XYZ: real-world projects & environments

- AI4All: Education and mentorship

- AI+Ethics: Middle grades course from MIT Media Lab (~30 hours)

- AI for Oceans: Tutorial from Code.org

- AI Experiments: Experiments with Google

- Teachable Machine: An initiative from Google

Washington Leadership Academy

Numerous schools are adding courses that build computational and AI literacy. Washington Leadership Academy's four-year course sequence is shown below.

- 9th grade: Computer Science Discoveries (free intro course from Code.org)

- 10th grade: AP Computer Science Principles (free course from Code.org), plus the start of three years of integrated math (with an emphasis on computation over calculation) and AP (or college-credit) Statistics

- 11th grade: Introduction to Data Science (UCLA class used in LAUSD) or a new online class from YouCubed.org

- 12th grade: A college-credit Python course (the AP CS Java class is an option, but Python is more commonly used in data science and AI)

We also foresee huge potential for entrepreneurship among young people around the world. The skills barrier to building an app or site to market and sell products or services has dropped sharply and will keep falling in the years to come. The Natural Interaction idea from the AI4K12 framework will be a key piece as natural language models are turned into products and embedded in existing tools and platforms.

To create opportunities for both students and teachers, we should neither worship nor flee from these emerging capabilities. We should stay curious, become better informed and invest in developments that could harness AI for social good.